Karsten Jeschkies Just a Page About Stuff I Do

Master's Thesis: News Filtering Based on Intrinsic User Feedback and a Modified ESA Model

Abstract

Today’s news travels around the world within seconds. In the past it took messengers days, or a ticker minutes; today a single tweet is enough to break a story across the globe. This speed also means that a great deal of news accumulates. News websites are cluttered with articles on many topics, even when they focus on a single area such as technology. It becomes harder to stay informed on the topics of interest: there is simply too much noise.

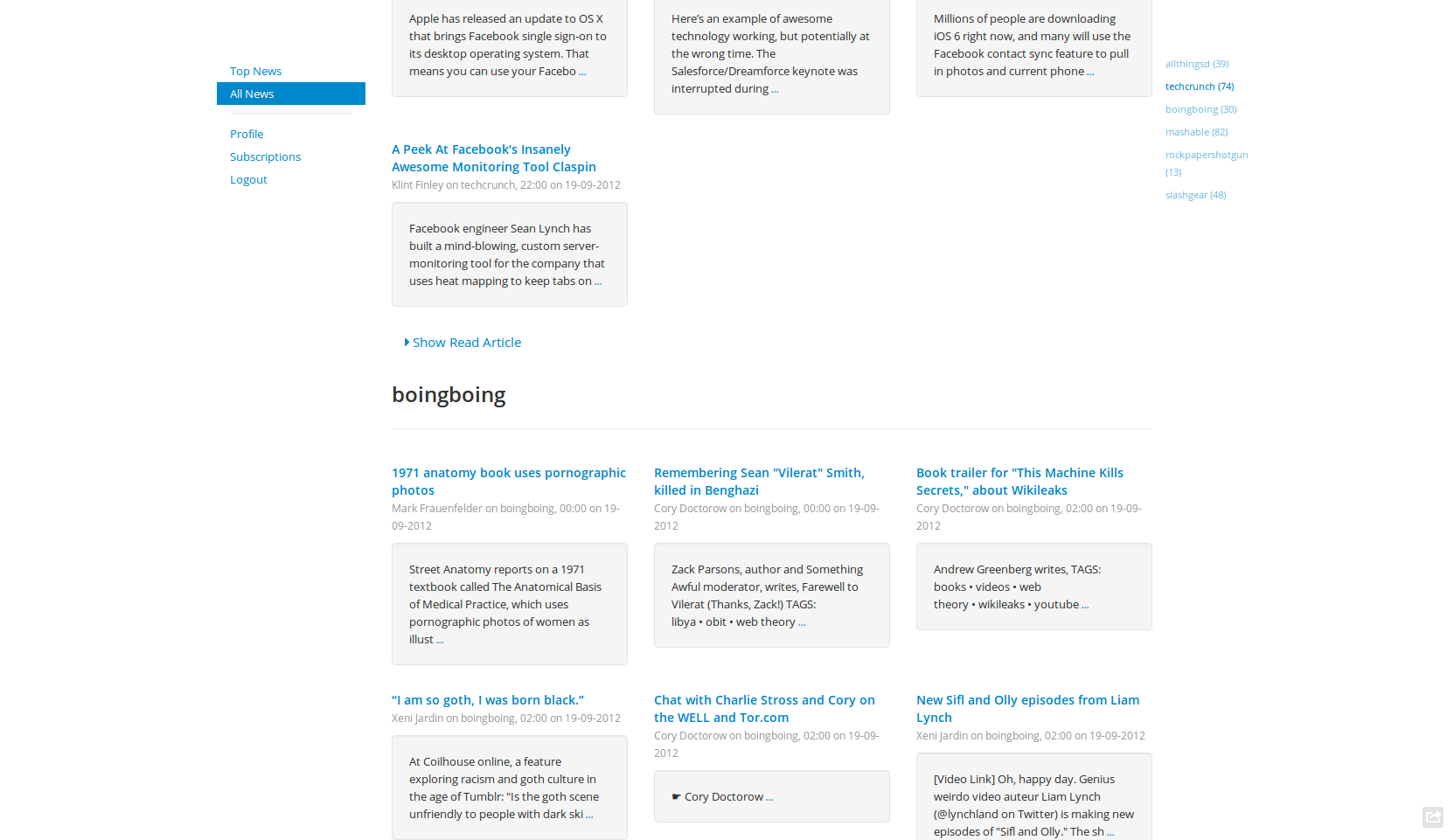

In this thesis I present a news filtering system in the form of a website that filters out noisy, uninteresting news and helps the consumer focus on the news she is interested in.

The filtering is reduced to a text classification problem. I compare different classification algorithms in combination with the text feature models TF-IDF, LDA, and a modified ESA model, which is introduced in this thesis.
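
To make "filtering as classification" concrete, here is a minimal sketch using only the Python standard library. It builds TF-IDF vectors and assigns an article to the nearest class centroid by cosine similarity. The nearest-centroid classifier, the whitespace tokenizer, the `interesting`/`uninteresting` labels, and the toy headlines are all assumptions made for illustration; they are not the algorithms, features, or data evaluated in the thesis.

```python
import math
from collections import Counter


def tokenize(text):
    # Naive whitespace tokenizer; a real system would also strip punctuation.
    return text.lower().split()


def fit_idf(docs):
    # Smoothed inverse document frequency for every term seen in training.
    n = len(docs)
    df = Counter()
    for doc in docs:
        df.update(set(tokenize(doc)))
    return {t: math.log((1 + n) / (1 + c)) + 1.0 for t, c in df.items()}


def tfidf_vector(text, idf):
    # Sparse, L2-normalised TF-IDF vector; unseen terms are ignored.
    counts = Counter(t for t in tokenize(text) if t in idf)
    vec = {t: c * idf[t] for t, c in counts.items()}
    norm = math.sqrt(sum(v * v for v in vec.values()))
    return {t: v / norm for t, v in vec.items()} if norm else {}


def cosine(a, b):
    # Both inputs are unit length, so the dot product is the cosine.
    return sum(v * b.get(t, 0.0) for t, v in a.items())


def train_centroids(docs, labels, idf):
    # Sum the vectors of each class, then normalise to unit length.
    sums = {}
    for doc, label in zip(docs, labels):
        centroid = sums.setdefault(label, Counter())
        for t, v in tfidf_vector(doc, idf).items():
            centroid[t] += v
    centroids = {}
    for label, c in sums.items():
        norm = math.sqrt(sum(v * v for v in c.values()))
        centroids[label] = {t: v / norm for t, v in c.items()}
    return centroids


def classify(text, idf, centroids):
    vec = tfidf_vector(text, idf)
    return max(centroids, key=lambda label: cosine(vec, centroids[label]))


# Toy training data (hypothetical headlines and labels).
train_docs = [
    "new phone chip released",
    "laptop chip benchmark",
    "team wins football match",
    "football league final score",
]
train_labels = ["interesting", "interesting", "uninteresting", "uninteresting"]

idf = fit_idf(train_docs)
centroids = train_centroids(train_docs, train_labels, idf)
print(classify("chip benchmark for new laptop", idf, centroids))
```

Swapping the feature model (for example, LDA topic weights instead of TF-IDF) would only change how `tfidf_vector` builds its vector; the classification step stays the same.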

Tests on the Reuters-21578 data set show that all algorithms yield good results and that the modified ESA model performs best. Tests on user data gathered over a five-month period show that simple classification alone cannot solve the filtering problem. It seems that individual user data needs to be enriched with collaborative user data.

Details

I implemented the system mostly in Python using gensim, scikit-learn, SciPy, and NumPy. The front end uses Flask and Twitter Bootstrap. Most parts of the system communicate via STOMP, with CoilMQ as the broker.
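
For readers unfamiliar with STOMP: it is a simple text-based messaging protocol in which each frame consists of a command line, `key:value` header lines, a blank line, a body, and a terminating NUL byte. The sketch below builds and parses such a frame by hand to show the wire format. The `/queue/articles` destination and the JSON payload are hypothetical, and the actual system presumably uses a STOMP client library rather than code like this.

```python
def build_frame(command, headers, body=""):
    # COMMAND, then one "key:value" line per header, a blank line,
    # the body, and a terminating NUL byte.
    lines = [command] + [f"{key}:{value}" for key, value in headers.items()]
    return ("\n".join(lines) + "\n\n" + body).encode("utf-8") + b"\x00"


def parse_frame(data):
    # Inverse of build_frame for a single, complete frame.
    text = data.rstrip(b"\x00").decode("utf-8")
    head, _, body = text.partition("\n\n")
    command, *header_lines = head.split("\n")
    headers = dict(line.split(":", 1) for line in header_lines)
    return command, headers, body


# Hypothetical message from a crawler to a feature-extraction worker.
frame = build_frame(
    "SEND",
    {"destination": "/queue/articles"},
    '{"url": "http://example.com/story"}',
)
command, headers, body = parse_frame(frame)
```

Because frames are plain text, components written in different languages (here Python and Ruby) can exchange messages through the same broker.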

The crawler is written in Ruby.

I added an implementation of a modified ESA model to gensim.

Some calculations were done on an EC2 instance.

You can download or fork the complete source code here and find the thesis here.

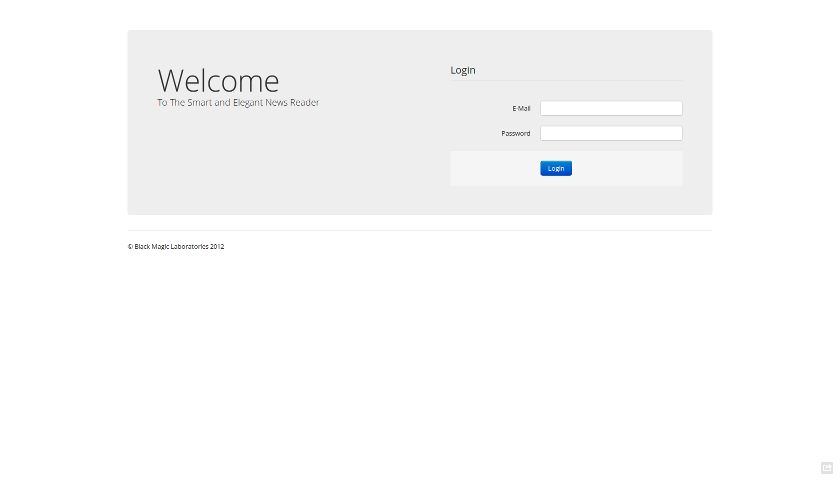

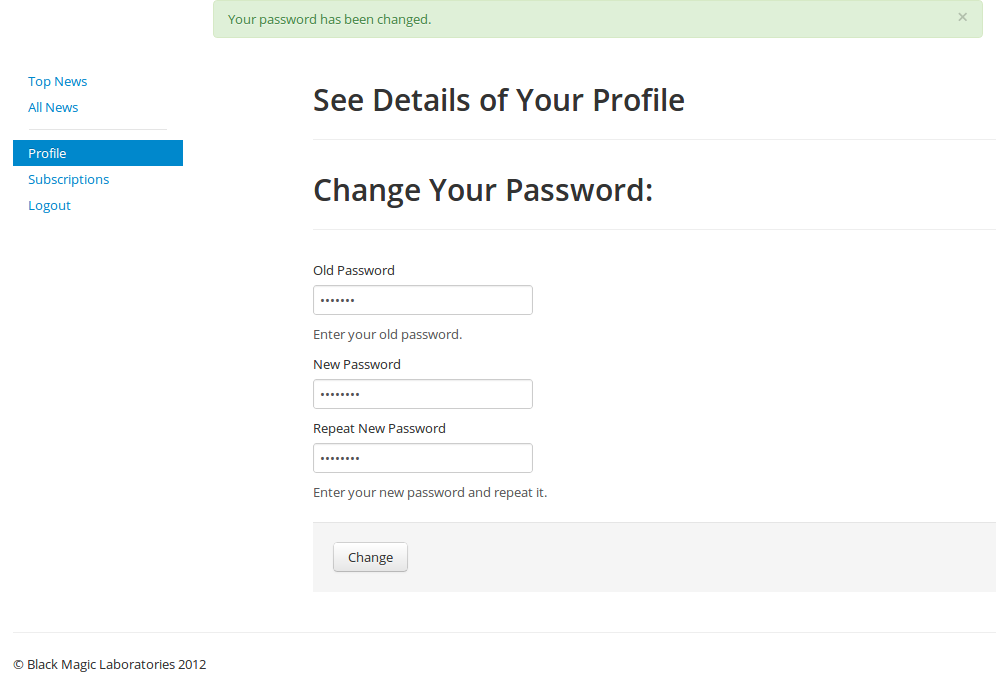

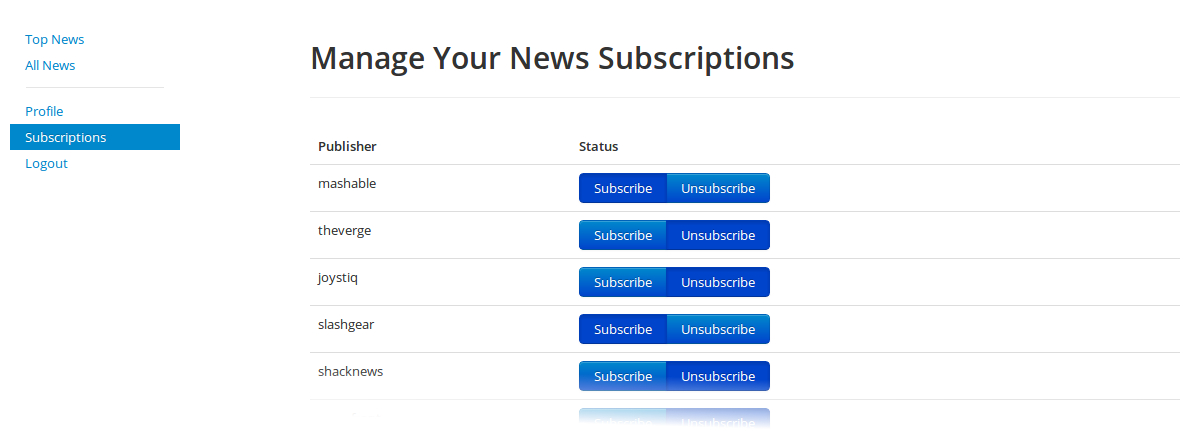

Front End Screenshots